Algorithmic bias isn’t usually the result of someone intentionally programming a system to discriminate. More often, it creeps in quietly through the data we use to train our models. If your historical hiring data reflects past societal biases, an AI trained on that data will learn to replicate those same unfair patterns. An algorithmic bias audit acts as a diagnostic tool, helping you look under the hood to find and fix these root causes of unfairness. It examines everything from your data sources to your model’s logic, giving you the insights needed to correct course and build technology that promotes equity, not prejudice.

Key Takeaways

- Audits are essential for compliance and trust: Regulations such as New York City’s Local Law 144 now require formal bias audits for certain HR technology, making them a critical tool for meeting legal demands, managing risk, and proving your commitment to fairness.

- Treat audits as an ongoing process: An effective audit is a repeatable cycle that includes clear planning, data validation, technical fairness testing, and continuous monitoring to ensure your AI remains fair long after the initial review.

- Embed fairness into your entire workflow: True accountability goes beyond the audit itself; it starts with proactive steps like using inclusive design and representative data, and it finishes with taking action on audit findings and establishing a long-term governance plan.

What Is an Algorithmic Bias Audit?

An algorithmic bias audit is a formal review of your AI system to check for unfair or discriminatory outcomes. Think of it as a quality control check, but instead of looking for product defects, you’re searching for hidden biases that could impact real people. In the world of HR, this means ensuring your AI tools for hiring, performance reviews, or promotions are making fair decisions. A proper AI bias audit doesn't just look at the final output; it examines the entire system, from the data it was trained on to the logic it uses, to make sure it operates ethically and legally.

This process is essential for any organization using AI in people-related decisions. It helps you identify potential issues before they become major legal or reputational problems. By proactively auditing your systems, you can build technology that is not only compliant but also genuinely fair, creating more equitable opportunities for everyone.

The Purpose Behind the Audit

The main goal of a bias audit is to find and fix unfairness. Algorithmic bias can creep into AI systems in subtle ways, often because the "data fed to the AI comes from a skewed sample." For example, if your historical hiring data reflects past societal biases, an AI trained on that data might learn to unfairly favor one group over another. This can lead to "discriminatory outcomes against certain groups," even if it was completely unintentional. An audit helps you uncover these hidden patterns before they cause harm.

Ultimately, the purpose is to build better, more trustworthy AI. Effective bias mitigation starts with using diverse and representative training data that reflects the full spectrum of your candidates or employees. An audit verifies that you’re on the right track, giving you the insights needed to correct course and ensure your technology promotes fairness, not prejudice.

What an Audit Actually Involves

So, what actually happens during an audit? It’s a multi-step process that goes far beyond a simple code review. A comprehensive audit involves a deep look at your data sources, model testing, and ongoing performance. Auditors apply best practices, from "diverse data sourcing and algorithm audits to human oversight and cross-validation," to get a complete picture of your AI’s behavior. This process is designed to catch issues that could cause your algorithms to make the wrong predictions about users.

A key part of a modern audit is continuous assessment. Effective systems require "automated monitoring capabilities that continuously assess AI... outputs for discriminatory patterns." This isn’t a one-and-done checkup. Instead, platforms like Warden AI provide ongoing analysis to ensure your system remains fair over time, adapting to new data and evolving regulations. This combination of deep testing and continuous oversight gives you the evidence needed to stand behind your AI’s fairness.

Why Bias Audits Are Critical for HR Tech

When you use AI to make decisions about people's careers, the stakes are incredibly high. An automated tool that screens résumés, assesses candidates, or recommends promotions has a direct impact on someone's livelihood. That’s why simply deploying an AI system and hoping for the best isn’t an option in the HR space. Algorithmic bias audits are not just a technical checkup; they are a fundamental part of responsible AI adoption. For HR tech vendors and the companies that rely on them, these audits are essential for navigating the complex intersection of technology, ethics, and law. They provide a clear framework for ensuring your tools are fair and effective.

Think of it this way: an audit is your quality assurance for fairness. It’s the process that verifies your AI is making decisions based on relevant qualifications, not on hidden biases baked into the data or the model's logic. Without this verification, you're operating with a significant blind spot. A comprehensive audit moves you from uncertainty to confidence, giving you the proof you need to stand behind your technology. Ultimately, conducting a thorough bias audit helps you meet critical legal requirements, build essential trust with everyone who interacts with your technology, and protect your business from serious financial and reputational harm.

Meeting Legal and Compliance Demands

The legal landscape for AI is changing quickly, and HR tech is right in the spotlight. Governments are passing laws to ensure automated employment tools are fair. New York City's Local Law 144 requires annual independent bias audits of automated employment decision tools, and the EU AI Act imposes testing, documentation, and human oversight obligations on high-risk AI systems, a category that covers many hiring and employment tools. Algorithmic bias, whether it comes from unbalanced historical data or other hidden factors, can lead to discriminatory outcomes against protected groups. This creates significant legal risk, as your organization must prove its AI tools follow anti-discrimination laws. A formal bias audit provides the evidence you need to demonstrate compliance and operate confidently within these new legal frameworks.

Building Trust with Your Stakeholders

Trust is the foundation of any successful HR technology. Candidates need to feel they are being evaluated fairly, employees need to trust the tools that shape their career paths, and your clients need assurance that your product is equitable. Bias audits are a powerful way to show your commitment to fairness and transparency. By proactively identifying and addressing potential biases, you can build confidence with every stakeholder. This commitment can be formalized through certifications like the Warden Assured standard, which signals to the market that your AI has been independently verified for fairness and accountability, making trust a core feature of your brand.

Protecting Your Business from Risk

Beyond legal fines, the risks of deploying a biased AI system are substantial. Training an AI on skewed data can lead to unfair decisions, which not only harms individuals but also erodes trust in your technology and damages your company's reputation. A single public incident of bias can result in lost customers, negative press, and difficulty attracting talent. Conducting regular AI bias auditing helps you identify and fix these issues before they cause harm. This proactive approach is a critical part of risk management, safeguarding your organization from potential legal battles and protecting the brand you’ve worked so hard to build.

Where Does Algorithmic Bias in HR Come From?

Algorithmic bias isn’t usually the result of someone intentionally programming a system to discriminate. Instead, it often creeps in quietly through the data we use, the models we design, and even the blind spots of the teams who build them. Understanding where this bias originates is the first step toward creating fairer and more effective HR tools. When an AI system makes a hiring or promotion recommendation, its decision is based on patterns it has learned. If those patterns are rooted in historical inequities or flawed logic, the AI will only amplify those problems, making biased decisions at a scale humans never could.

The challenge is that these sources of bias can be subtle and deeply embedded in the development process. It could be data that reflects decades of societal prejudice, a model that prioritizes the wrong variables, or a development team that lacks the diverse perspectives needed to spot potential issues. An audit helps you look under the hood to find and fix the root causes of unfairness before they impact real people and expose your business to risk. Let’s break down the most common sources of algorithmic bias in HR so you know exactly what to look for.

Flawed Training Data and Historical Patterns

Most AI models learn by analyzing vast amounts of data. In HR, this often means looking at past hiring decisions, performance reviews, and promotion records. The problem is that this historical data is a reflection of our own past biases, both conscious and unconscious. If a company has historically hired more men for leadership roles, an AI trained on that data will likely learn to associate male candidates with leadership potential. It’s not making a moral judgment; it’s simply identifying a pattern. This perpetuates a cycle where past discrimination becomes the blueprint for future decisions, as the algorithm learns to favor candidates who look like those who have been successful in the past. This is why simply feeding an AI more data isn't always the answer. You have to ensure the data is representative and scrubbed of these historical imbalances to give the model a fair chance at making equitable decisions.

Gaps in Model Design and Implementation

Even with perfectly balanced data, the way an AI model is designed and implemented can introduce bias. The features an algorithm is told to weigh, the proxies it uses for success, and the lack of human oversight can all lead to discriminatory outcomes. For example, a model might use proxies like a candidate's zip code to predict job performance, which could correlate with race or socioeconomic status and unfairly penalize certain applicants. This is where continuous monitoring becomes essential. An AI model isn't a "set it and forget it" tool. It needs to be regularly tested to see how its decisions impact different demographic groups. Without automated checks and balances, biased patterns can emerge and scale quickly.
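One rough way to screen a feature for proxy behavior is to ask how much better you can guess a candidate's demographic group once you know the feature. The sketch below is a minimal illustration, not a full proxy analysis: the function name `proxy_strength`, the zip codes, and the group labels are all hypothetical, and the accuracy is measured in-sample, so it will overstate the effect for features with many distinct values.

```python
from collections import Counter, defaultdict


def proxy_strength(feature_values, group_labels):
    """Compare how well group membership can be guessed with and without a feature.

    Returns (baseline_accuracy, feature_accuracy). A large jump from baseline to
    feature accuracy suggests the feature may act as a proxy for the group.
    """
    n = len(group_labels)
    # Baseline: always guess the overall majority group.
    baseline = Counter(group_labels).most_common(1)[0][1] / n

    # With the feature: guess the majority group within each feature value.
    by_value = defaultdict(Counter)
    for value, group in zip(feature_values, group_labels):
        by_value[value][group] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / n


# Hypothetical toy data: two zip codes, two demographic groups.
zip_codes = ["10001"] * 4 + ["10002"] * 4
groups = ["A", "A", "A", "B", "B", "B", "B", "A"]

baseline, with_zip = proxy_strength(zip_codes, groups)
print(f"baseline={baseline:.2f}, with zip code={with_zip:.2f}")
```

In this toy data, knowing the zip code lifts the guess accuracy from 50% to 75%, which is the kind of jump that would justify a closer look at whether the feature belongs in the model.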

A Step-by-Step Look at the Bias Audit Process

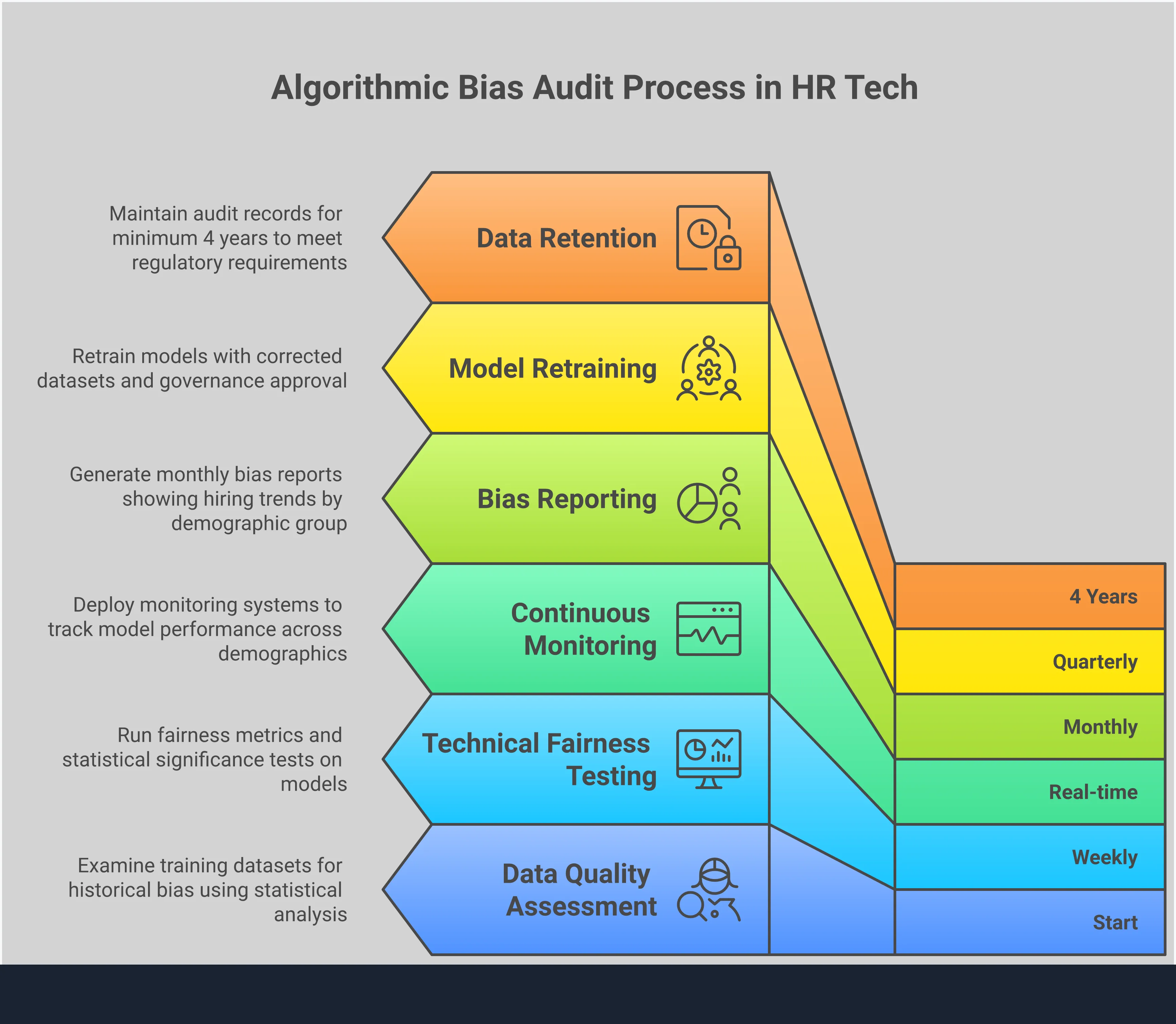

An algorithmic bias audit isn’t a vague, abstract concept. It’s a structured process with clear, actionable stages. Think of it as a systematic health check for your AI, designed to identify and address fairness issues before they become major problems. By following a consistent framework, you can move from simply worrying about bias to actively managing it. This approach ensures your audit is thorough, repeatable, and produces meaningful results that you can act on.

Let’s walk through the four key steps that make up a comprehensive bias audit.

Step 1: Prepare and Plan Your Audit

Before you dive into any data, the first step is to lay a solid foundation. This means defining the scope and goals of your audit. Start by identifying the specific AI system you’ll be evaluating and the exact decision it automates, like screening resumes or assessing candidate skills. As the Ada Lovelace Institute notes, the goal of an audit is to "check if AI systems are biased against certain groups of people," so you’ll also need to specify which protected categories (like race, gender, or age) you'll be assessing for fairness. A clear plan, supported by a comprehensive AI assurance platform, ensures everyone on your team is aligned and the audit stays focused on what matters most.

Step 2: Examine Your Data Quality

Your AI model is only as good as the data it’s trained on. That’s why a critical part of any audit is a deep look at your datasets. Biased or unrepresentative data can seriously distort your model’s outcomes. As one expert points out, "effective bias mitigation starts with diverse, representative training data that accurately reflects the full spectrum of target audiences." In an HR context, this means checking if your data reflects the qualified applicant pool, not just historical hiring patterns that may contain past biases.
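A simple starting point for this check is to compare each group's share of the training data with its share of a reference population, such as the qualified applicant pool. The following is a minimal sketch under stated assumptions: `representation_gaps` is a hypothetical helper, and the 70/30 split and 50/50 reference shares are invented for illustration.

```python
from collections import Counter


def representation_gaps(training_groups, reference_shares):
    """Return each group's share in the training data minus its reference share."""
    counts = Counter(training_groups)
    total = len(training_groups)
    return {
        group: round(counts.get(group, 0) / total - share, 3)
        for group, share in reference_shares.items()
    }


# Hypothetical numbers for illustration only.
training_groups = ["men"] * 70 + ["women"] * 30
qualified_pool_shares = {"men": 0.5, "women": 0.5}

gaps = representation_gaps(training_groups, qualified_pool_shares)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.1%} relative to the qualified pool")
```

A positive gap means the group is over-represented in the training data relative to the reference; a negative gap means it is under-represented. Choosing the right reference population is itself a judgment call that the audit plan should document.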

Step 3: Test Your Model for Fairness

With your plan in place and your data validated, it’s time to test the model itself. This involves running a series of technical tests to measure its performance across different demographic groups. The goal is to see if the AI produces equitable outcomes for everyone. This isn’t a one-and-done task; effective bias detection requires "automated monitoring capabilities that continuously assess AI... outputs for discriminatory patterns." By using statistical fairness metrics, you can quantify any disparities and pinpoint exactly where your model might be falling short. This continuous testing is especially important for HR tech vendors who need to provide ongoing assurance to their clients.

Step 4: Document and Report the Results

The final step is to translate your findings into a clear, actionable report. This document should detail your methodology, present the results of your fairness testing, and, most importantly, offer concrete recommendations for improvement. The report should "suggest ways to fix any biases... like using more diverse training data or adjusting the AI's settings." This documentation serves as crucial evidence of your due diligence and provides a roadmap for your development team to make necessary fixes. For companies that pass the audit, this report can also be summarized for public transparency, like those listed in the Warden Assured Directory, building trust with users and customers.
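To make the report easy to archive and compare across audit cycles, some teams keep a machine-readable version alongside the written one. The sketch below shows one possible shape; the `AuditReport` class, its field names, and every value in the example are hypothetical and not a prescribed reporting format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AuditReport:
    system_name: str
    decision_audited: str
    protected_categories: list
    methodology: str
    selection_rates: dict  # fairness test results, keyed by group
    recommendations: list = field(default_factory=list)

    def to_json(self) -> str:
        # Serialize the whole report so it can be stored or diffed later.
        return json.dumps(asdict(self), indent=2)


# All values below are hypothetical placeholders.
report = AuditReport(
    system_name="resume-screener",
    decision_audited="shortlisting applicants for interview",
    protected_categories=["gender", "race", "age"],
    methodology="selection-rate comparison on held-out applications",
    selection_rates={"group_a": 0.42, "group_b": 0.31},
    recommendations=[
        "Retrain with more representative data",
        "Re-test after adjusting the shortlisting threshold",
    ],
)
print(report.to_json())
```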

How Do You Actually Measure Fairness?

Measuring fairness in AI isn't as simple as getting a single pass or fail score. The concept of "fairness" itself can mean different things depending on the context of your hiring tool or HR platform. Is it about ensuring equal outcomes for all groups, or is it about making sure the tool is equally accurate for everyone? Answering this question is the first step in a meaningful audit. To get a clear picture, you need to use specific statistical tests, often called fairness metrics, to see how your AI system behaves across different demographic groups.

Think of these metrics as different lenses you can use to examine your AI’s performance. Each one reveals a unique aspect of its behavior, and no single metric can tell you the whole story. The goal is to use a combination of these tools to build a comprehensive understanding of your system's impact. This process helps you move from a vague goal of "being fair" to a concrete, data-driven approach for identifying and addressing potential biases. By using these precise measurements, you can pinpoint exactly where your system might be falling short and take targeted action to fix it.

Understanding Key Fairness Metrics

To see if your AI is treating people fairly, you need the right tools. Fairness metrics are specialized tests that check for bias in your model’s outcomes. While there are many, a few common ones provide a great starting point. Demographic Parity, for instance, checks if different groups receive positive outcomes at similar rates. In HR, this might mean asking if your AI sourcing tool recommends male and female candidates at similar rates relative to how many of each it evaluated. Equal Opportunity focuses on qualified candidates: it checks that people who should be selected have the same chance of being selected regardless of group, which means similar true positive rates. Equalized Odds goes a step further and requires the model’s error rates to match as well, so both true positive and false positive rates are similar across groups. A thorough AI bias audit will use these metrics to find imbalances.
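The three metrics can all be read off the same per-group counts. The sketch below is a minimal illustration with invented data; it assumes you have a trustworthy "qualified" label for each candidate, which in practice is often the hardest part. The function names and the groups "A" and "B" are hypothetical.

```python
def safe_rate(numerator, denominator):
    return numerator / denominator if denominator else float("nan")


def group_metrics(records):
    """records: iterable of (group, qualified, selected) tuples with boolean flags."""
    counts = {}
    for group, qualified, selected in records:
        c = counts.setdefault(
            group, {"n": 0, "sel": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0}
        )
        c["n"] += 1
        c["sel"] += selected
        if qualified:
            c["pos"] += 1
            c["tp"] += selected
        else:
            c["neg"] += 1
            c["fp"] += selected
    return {
        g: {
            # Compare across groups for demographic parity.
            "selection_rate": safe_rate(c["sel"], c["n"]),
            # Compare across groups for equal opportunity.
            "true_positive_rate": safe_rate(c["tp"], c["pos"]),
            # Together with the true positive rate: equalized odds.
            "false_positive_rate": safe_rate(c["fp"], c["neg"]),
        }
        for g, c in counts.items()
    }


def make(group, qualified, selected, count):
    return [(group, qualified, selected)] * count


# Hypothetical outcomes: 10 candidates per group, 6 of them qualified.
records = (
    make("A", True, True, 5) + make("A", True, False, 1)
    + make("A", False, True, 1) + make("A", False, False, 3)
    + make("B", True, True, 3) + make("B", True, False, 3)
    + make("B", False, True, 1) + make("B", False, False, 3)
)

metrics = group_metrics(records)
for group, values in metrics.items():
    print(group, {name: round(v, 2) for name, v in values.items()})
```

In this toy data the false positive rates match, but group B's qualified candidates are selected far less often than group A's, so the system fails equal opportunity (and therefore equalized odds) even before you look at overall selection rates.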

Exploring Different Fairness Approaches

Beyond specific metrics, you need a broader strategy for fairness. This is where AI governance comes in. It’s about creating a set of rules and practices for developing and using AI responsibly. A solid governance framework includes using diverse and representative data to train your models, which helps prevent historical biases from being baked into the system from the start. It also involves implementing continuous bias detection and mitigation tools to catch issues as they arise. Building transparency into your models, so you can explain their decisions, is another key piece. This holistic approach ensures that fairness is a core part of your AI’s entire lifecycle, not just an item on a final checklist.

Assessing Fairness on an Individual Level

Group-level fairness is important, but it can sometimes hide biases that affect specific individuals. That’s why it’s crucial to also look for intersectional bias, where unfairness happens at the intersection of multiple identities, like race and gender. For example, an AI model might show no bias against women as a whole or against a specific racial group as a whole, but it could still consistently penalize women of color. These "layered biases" often have the most significant impact on underrepresented groups. A proper audit digs deeper than broad categories to identify and address these more nuanced forms of discrimination, ensuring your AI is fair for everyone.
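A small worked example shows how this can happen. In the invented data below, selection rates are identical when you slice by gender alone or by race alone, yet they differ sharply once the two attributes are combined. The helper names and the labels `group_x` and `group_y` are hypothetical.

```python
from collections import defaultdict


def selection_rates(records, key):
    """Selection rate for each subgroup, where key(record) names the subgroup."""
    totals, selected = defaultdict(int), defaultdict(int)
    for record in records:
        k = key(record)
        totals[k] += 1
        selected[k] += record["selected"]
    return {k: selected[k] / totals[k] for k in totals}


def cohort(gender, race, n_selected, n_total):
    return [
        {"gender": gender, "race": race, "selected": i < n_selected}
        for i in range(n_total)
    ]


# Hypothetical data: 10 candidates in each gender-by-race subgroup.
records = (
    cohort("woman", "group_x", 6, 10)
    + cohort("woman", "group_y", 2, 10)
    + cohort("man", "group_x", 2, 10)
    + cohort("man", "group_y", 6, 10)
)

by_gender = selection_rates(records, lambda r: r["gender"])
by_race = selection_rates(records, lambda r: r["race"])
by_both = selection_rates(records, lambda r: (r["gender"], r["race"]))

print("by gender:", by_gender)
print("by race:  ", by_race)
print("combined: ", by_both)
```

Every single-attribute view reports a 40% selection rate, while the combined view ranges from 20% to 60%. Keep in mind that intersectional subgroups are often small, so the rates you compute for them are noisier than the group-level ones.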

Choosing the Right Metrics for Your AI

There’s no single "best" fairness metric for every situation. The right choice depends entirely on your specific goals and the context of your AI tool. For example, the metrics you’d use for a résumé screening tool might be different from those for a performance review system. This is why assembling a diverse team is so important. You need data scientists, diversity and inclusion experts, and legal professionals all at the table to decide what fairness looks like for your organization. Setting clear, measurable goals, like "reduce gender bias in candidate shortlisting by 30%," helps guide the audit process and ensures everyone is working toward the same outcome.
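A goal like a 30% reduction only becomes measurable once you decide what quantity is being reduced. One possible reading, used in the sketch below, is the gap between two groups' shortlisting rates. That definition, the function name, and all of the rates are assumptions made for illustration; your team may settle on a different measure.

```python
def relative_gap_reduction(baseline_gap, current_gap):
    """Fraction by which the gap between two groups' shortlisting rates has shrunk."""
    if baseline_gap <= 0:
        raise ValueError("baseline_gap must be positive")
    return (baseline_gap - current_gap) / baseline_gap


# Hypothetical shortlisting rates before and after mitigation.
baseline_gap = abs(0.50 - 0.30)  # 20 percentage points
current_gap = abs(0.48 - 0.35)   # 13 percentage points

reduction = relative_gap_reduction(baseline_gap, current_gap)
goal_met = reduction >= 0.30
print(f"gap reduced by {reduction:.0%}; 30% goal met: {goal_met}")
```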

Tools and Methods for an Effective Bias Audit

Conducting a thorough bias audit isn't something you can do with a simple checklist. It requires a specific set of tools and methods designed to uncover and measure fairness in complex AI systems. Think of it as a three-pronged approach: you need automated tools to do the heavy lifting, statistical methods to interpret the results, and independent platforms to validate your findings. Combining these elements gives you a comprehensive and defensible audit process that goes beyond surface-level checks and provides real, actionable insights into how your AI operates. This approach helps you move from simply wanting to be fair to actively proving it.

Automated Testing and Detection Tools

Relying on manual spot-checks to find bias in an AI system is like trying to find a needle in a haystack. Automated tools are essential for doing this work effectively and at scale. These systems are designed to continuously monitor your AI’s outputs, flagging discriminatory patterns as they emerge. This real-time analysis allows you to catch potential issues before they become significant problems. An effective AI assurance platform integrates this continuous testing with mechanisms for human oversight, which is crucial for handling complex or ethically ambiguous situations that an algorithm alone can't resolve. This combination of machine speed and human judgment gives you a powerful way to maintain fairness over the entire lifecycle of your AI.

Statistical Analysis and Fairness Metrics

At its core, a bias audit is a data-driven process. You need robust statistical methods to analyze how your AI model treats different groups of people. This involves applying specific fairness metrics to your model’s outputs to see if there are any statistically significant disparities between demographic groups, such as race, gender, or age. By digging into the data, you can identify whether your AI is producing skewed results. This process of AI bias auditing relies on best practices like cross-validation and testing with diverse datasets to ensure the insights you gather are both accurate and actionable. It’s how you move from a gut feeling about fairness to a concrete, evidence-based understanding of your model’s performance.
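One common way to check whether a gap in selection rates could plausibly be due to chance is a two-proportion z-test. The sketch below implements it with the standard library only; it is a textbook test swapped in for illustration, not a method named by any particular regulation, and the counts are invented. It relies on a normal approximation, so it is less reliable for small groups.

```python
import math


def two_proportion_z_test(selected_a, total_a, selected_b, total_b):
    """Return (z, two_sided_p_value) for the difference in selection rates."""
    p_a = selected_a / total_a
    p_b = selected_b / total_b
    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (selected_a + selected_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_error == 0:
        raise ValueError("selection rates are all 0 or all 1; test is undefined")
    z = (p_a - p_b) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 60 of 100 selected in group A, 40 of 100 in group B.
z, p_value = two_proportion_z_test(60, 100, 40, 100)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value says the gap is unlikely to be noise; it does not say the gap is large enough to matter. Audits typically report an effect size, such as the ratio of selection rates, alongside any significance test.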

Third-Party Audit and Governance Platforms

While internal testing is a great first step, bringing in a third party adds a critical layer of objectivity and credibility to your audit. Algorithmic bias can stem from historical data imbalances or subtle correlations, leading to discriminatory outcomes that an internal team might overlook. Independent audit and governance platforms provide the unbiased perspective needed to ensure true fairness and compliance. Using a dedicated platform helps operationalize AI regulations and provides a structured framework for ongoing governance. Achieving a standard like Warden Assured demonstrates to customers, regulators, and candidates that your commitment to fairness has been independently verified, building a foundation of trust.

Common Challenges in Algorithmic Bias Audits

While algorithmic bias audits are essential, they aren't always a walk in the park. The process comes with its own set of hurdles that can feel daunting, especially when you’re just getting started. Understanding these common challenges ahead of time helps you prepare for them, ensuring your audit is as smooth and effective as possible. From technical roadblocks to team alignment, here are the key obstacles you might face.

Technical Complexity and Resource Limits

Conducting a thorough bias audit requires more than just a simple software scan. It demands a sophisticated mix of automated tools and human expertise. Effective systems need to continuously monitor AI outputs for discriminatory patterns, but they also need human oversight for nuanced ethical judgments. For many organizations, building this capability in-house is a heavy lift, requiring specialized talent and significant resources.

Data Quality and Availability Hurdles

The principle of "garbage in, garbage out" is especially true for AI systems. If the data used to train your model is flawed or unrepresentative, the model will inevitably produce biased outcomes. In HR, historical data often reflects past societal biases, which can be learned and amplified by an algorithm. Sourcing clean, balanced, and comprehensive datasets is one of the biggest challenges in the audit process. A proper AI bias audit must begin with a deep examination of your data sources to identify and correct these foundational issues before they skew your results.

Balancing Costs and Team Coordination

Let’s be practical: a comprehensive audit requires both time and money. There are costs associated with tools, specialized personnel, and potential third-party validation. Beyond the budget, an audit demands seamless coordination across multiple departments. Your technical teams, HR professionals, and legal experts all need to be aligned and working together. Getting everyone on the same page, speaking the same language, and allocating the necessary resources can be a major organizational challenge. Coordinating these efforts is a common pain point for enterprise teams trying to operationalize AI governance effectively.

Best Practices for a Successful Bias Audit

Conducting a successful algorithmic bias audit is about more than just running a few tests and checking a box. It’s a strategic process that requires careful planning, the right people, and a commitment to ongoing improvement. Think of it as building a strong foundation for responsible AI. When you get it right, you not only meet compliance requirements but also create better, fairer products that build trust with your users and protect your organization from risk. A one-off audit might catch existing issues, but a truly effective approach embeds fairness into your operations from the start.

This means moving from a reactive mindset to a proactive one. Instead of waiting for a problem to surface, you actively look for potential issues and build systems to prevent them. The following best practices will help you create a robust framework for your bias audits. By assembling a diverse team, partnering with outside experts, implementing continuous monitoring, and focusing on inclusive design, you can ensure your AI systems are not only powerful but also principled. These steps are essential for any HR leader or tech vendor who is serious about deploying AI responsibly and ethically.

Partner with Independent Experts for Transparency

Even with a great internal team, it’s easy to have blind spots. Partnering with an independent, third-party auditor brings a fresh, objective perspective to the process. These experts aren't influenced by internal politics or development history; their only goal is to provide an unbiased assessment of your AI system. This external validation is incredibly valuable for building trust with customers, partners, and regulators.

Working with an outside firm demonstrates a genuine commitment to transparency and accountability. It shows you’re willing to have your work scrutinized by professionals who specialize in fairness and compliance. This collaboration not only enhances the credibility of your audit but also provides you with expert guidance on best practices.

Implement a Continuous Monitoring System

Bias isn't a static problem you can solve just once. An AI model that is fair today could develop biases tomorrow as it processes new data or as societal patterns shift. That’s why a one-and-done audit is never enough. The most effective approach is to implement a system for continuous monitoring that regularly checks your AI’s performance for fairness issues.

Think of it as a permanent watchdog for your algorithm. This system can automatically flag anomalies and alert your team to potential biases before they become significant problems. By making monitoring a part of your regular operations, you can maintain compliance over the long term and ensure your AI tools remain fair and reliable throughout their lifecycle. This proactive stance is key to managing risk and maintaining stakeholder trust.
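As a rough illustration of what such a watchdog might look like, the sketch below keeps a rolling window of recent decisions and raises an alert when the lowest group selection rate falls too far below the highest. The class name, window size, minimum sample size, and the 0.8 alert level are all assumptions; the 0.8 figure is borrowed from the four-fifths rule of thumb used in US employment-selection guidance and is not a legal threshold for any specific law.

```python
from collections import deque


class FairnessMonitor:
    def __init__(self, window_size=200, min_ratio=0.8, min_per_group=30):
        self.window = deque(maxlen=window_size)  # oldest decisions drop off
        self.min_ratio = min_ratio
        self.min_per_group = min_per_group

    def record(self, group, selected):
        self.window.append((group, bool(selected)))

    def check(self):
        """Return a status dict, or None if there is not enough data to compare."""
        totals, hits = {}, {}
        for group, selected in self.window:
            totals[group] = totals.get(group, 0) + 1
            hits[group] = hits.get(group, 0) + selected
        # Skip groups with too few recent decisions to give a stable rate.
        rates = {
            g: hits[g] / totals[g]
            for g in totals
            if totals[g] >= self.min_per_group
        }
        if len(rates) < 2 or max(rates.values()) == 0:
            return None
        ratio = min(rates.values()) / max(rates.values())
        return {"rates": rates, "ratio": ratio, "alert": ratio < self.min_ratio}


# Hypothetical stream of decisions for two groups.
monitor = FairnessMonitor()
for i in range(50):
    monitor.record("A", i < 25)  # 25 of 50 selected
    monitor.record("B", i < 10)  # 10 of 50 selected

status = monitor.check()
print(status)
```

In a real deployment the alert would feed whatever paging or ticketing process your team already uses, and the window would likely be time-based rather than count-based.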

Use Representative Data and Inclusive Design

The fairness of your AI system starts with the data it’s trained on. If your training data is skewed or fails to represent the diverse populations your tool will impact, bias is almost inevitable. A key best practice is to ensure your datasets are balanced and accurately reflect the real world. This might involve actively sourcing data from underrepresented groups or using techniques to correct for historical imbalances.

Beyond the data, you should also adopt inclusive design principles from the very beginning of the development process. This means thinking about fairness, accessibility, and potential negative impacts on different groups at every stage, not just as an afterthought during an audit. By building with intention and considering a wide range of human experiences, you can prevent many biases from ever taking root in the first place.

The Laws Driving Algorithmic Audits

The conversation around AI in HR is no longer just about innovation; it’s about accountability. Governments around the world are establishing rules to ensure that the AI tools used in hiring and employment are fair and transparent. These regulations are not abstract guidelines. They are concrete legal requirements that carry significant penalties for non-compliance, making algorithmic bias audits an essential practice for any company developing or using HR technology.

This shift toward regulation means that proving your AI is fair is now a core business function. For HR tech vendors, it’s about market access and building a trustworthy product that customers can rely on. For employers and staffing agencies, it’s about mitigating legal risk and upholding a commitment to equitable hiring practices. Understanding the key laws shaping this landscape is the first step toward building a compliant and responsible AI strategy. From New York City’s pioneering local law to the European Union’s sweeping AI Act, regulators are setting a new, higher standard for what it means to use AI responsibly in the workplace. Ignoring these developments isn't just risky; it's a direct threat to your business's sustainability and reputation.

Understanding NYC Local Law 144 and State Rules

New York City’s Local Law 144 set a major precedent in the United States for regulating AI in hiring. The law requires employers using “automated employment decision tools,” like resume screeners or video interview analysis software, to have an independent bias audit conducted annually. It’s not enough to just run the audit; a summary of the results must be published on the company’s website, and candidates must be notified that such a tool is being used. This focus on transparency is a game-changer, putting the responsibility on companies to prove their tools are fair.

While NYC was the first, it certainly won’t be the last. Other states and cities are developing similar legislation, creating a complex patchwork of compliance requirements. The core principle remains the same: if you use AI to make employment decisions, you need a process for AI bias auditing to demonstrate fairness and avoid discrimination.
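The central number in a Local Law 144 style audit is the impact ratio. As we understand the implementing rules, for a tool that selects or rejects candidates it is each category's selection rate divided by the selection rate of the most selected category. The sketch below shows that arithmetic only; the function name, category labels, and counts are hypothetical, and a real audit must follow the current rules on categories, intersectional breakdowns, and small samples.

```python
def impact_ratios(selected_by_category, applicants_by_category):
    """Selection rate of each category relative to the most selected category."""
    rates = {
        category: selected_by_category[category] / applicants_by_category[category]
        for category in applicants_by_category
    }
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no category has any selections")
    return {category: rate / highest for category, rate in rates.items()}


# Hypothetical applicant and selection counts.
applicants = {"category_1": 100, "category_2": 100, "category_3": 90}
selected = {"category_1": 50, "category_2": 30, "category_3": 45}

ratios = impact_ratios(selected, applicants)
for category, ratio in ratios.items():
    print(f"{category}: impact ratio {ratio:.2f}")
```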

Getting Ready for the EU AI Act

The European Union’s AI Act is one of the most comprehensive pieces of AI regulation in the world. It takes a risk-based approach, and many AI systems used in HR, from recruiting to performance management, are classified as "high-risk." This designation comes with strict obligations, including rigorous testing, clear documentation, and human oversight. The goal is to ensure that AI systems are safe, transparent, and do not perpetuate harmful biases.

For companies operating in the EU, the stakes are incredibly high. Failing to comply can lead to massive fines: for many obligations, up to €15 million or 3% of global annual turnover, with higher ceilings for prohibited practices. The Act requires businesses to proactively implement measures to identify and mitigate bias throughout the AI lifecycle. For HR technology vendors, aligning systems with these rules isn’t just about avoiding penalties; it’s about securing your ability to operate in a major global market.

How to Prepare for Future Regulations

Instead of reacting to each new law as it appears, the smart approach is to build a proactive and adaptable governance strategy. The global trend is clear: regulators are demanding greater fairness, accountability, and transparency in AI. Waiting for a law to pass in your specific jurisdiction is a risky strategy that leaves you playing catch-up. A better path is to adopt a universal standard of trust and compliance from the start.

You can prepare by embedding fairness into your development and procurement processes now. This means conducting regular bias audits, maintaining clear documentation, and prioritizing transparency with all stakeholders. By committing to a high standard of AI assurance, you not only meet current requirements but also build a resilient framework that can adapt to future regulations, wherever they may emerge.

What to Do After the Audit Is Complete

Completing an algorithmic bias audit is a huge step, but it’s not the finish line. Think of the audit report as your roadmap. It shows you where the problems are and gives you the insights needed to fix them. The real work begins now, turning those findings into a stronger, fairer AI system. This is your opportunity to build a continuous cycle of improvement that protects your users and your business. By taking clear, deliberate action, you can move from simply identifying bias to actively preventing it.

Take Immediate Steps to Correct Bias

Once you have your audit results, the first priority is to address the issues head-on. This usually starts with a close look at your training data. If the audit reveals bias against a particular group, it’s often because that group was underrepresented or misrepresented in the data used to train the model. In that case, the most effective fix is usually to retrain your algorithm with a more diverse and representative dataset. You might also need to adjust model features or decision thresholds to ensure fairer outcomes. The goal is to implement targeted corrections that directly resolve the biases uncovered in your bias audit report.
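To make the idea of adjusting thresholds concrete, here is a minimal sketch of how you might compare selection rates across groups before and after a change. It is an illustration under stated assumptions, not a prescribed audit method: the group labels, scores, and the 0.8 cutoff (the commonly cited "four-fifths" rule of thumb) are all hypothetical choices that do not come from any specific audit report.

```python
from collections import defaultdict

def selection_rates(records, threshold):
    """Share of candidates in each group whose score meets the threshold."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, score in records:
        totals[group] += 1
        if score >= threshold:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}

def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so all rates are equal; report no disparity.
        return {g: 1.0 for g in rates}
    return {g: r / best for g, r in rates.items()}

# Hypothetical (group, model score) pairs, invented for illustration only.
records = [
    ("A", 0.90), ("A", 0.70), ("A", 0.60), ("A", 0.40),
    ("B", 0.80), ("B", 0.55), ("B", 0.50), ("B", 0.30),
]

# Assumed cutoff: flag any group whose impact ratio falls below 0.8.
for threshold in (0.6, 0.5):
    ratios = impact_ratios(selection_rates(records, threshold))
    flagged = sorted(g for g, r in ratios.items() if r < 0.8)
    print(f"threshold={threshold}: ratios={ratios}, flagged={flagged}")
```

With this toy data, group B is flagged at a threshold of 0.6 but not at 0.5. A real correction would also need to confirm that the new threshold still produces valid decisions, and a changed threshold is no substitute for fixing unrepresentative training data.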

Establish Continuous Monitoring and Feedback

An audit provides a snapshot in time, but AI models are not static. They can drift as they process new data, causing biases to re-emerge unexpectedly. That’s why continuous monitoring is so important. Set up automated systems that constantly check your AI’s outputs for discriminatory patterns and alert you to potential issues in real time. This proactive approach should be paired with a human feedback loop. Having experts review complex or sensitive cases helps you catch nuances the machine might miss and ensures your system remains fair and effective over its entire lifecycle.
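As a rough sketch of what such an automated check could look like, the example below keeps a rolling window of recent decisions and reports any group whose selection rate falls too far below the best-performing group's rate. The class name `FairnessMonitor`, the window size, the minimum sample size per group, and the 0.8 alert ratio are all hypothetical choices for illustration; a production system would tune them and route alerts to human reviewers.

```python
from collections import deque

class FairnessMonitor:
    """Hypothetical rolling-window check on selection rates by group."""

    def __init__(self, window_size=500, min_ratio=0.8, min_per_group=30):
        self.window = deque(maxlen=window_size)  # oldest decisions drop off
        self.min_ratio = min_ratio               # assumed alert cutoff
        self.min_per_group = min_per_group       # skip groups with few samples

    def record(self, group, selected):
        """Store one decision and return the groups currently flagged."""
        self.window.append((group, bool(selected)))
        return self.check()

    def check(self):
        totals, hits = {}, {}
        for group, selected in self.window:
            totals[group] = totals.get(group, 0) + 1
            hits[group] = hits.get(group, 0) + int(selected)
        # Only compare groups with enough recent decisions to be meaningful.
        rates = {g: hits[g] / n for g, n in totals.items()
                 if n >= self.min_per_group}
        if len(rates) < 2:
            return []
        best = max(rates.values())
        if best == 0:
            return []
        return sorted(g for g, r in rates.items() if r / best < self.min_ratio)

# Tiny illustrative run with invented decisions and small limits.
monitor = FairnessMonitor(window_size=8, min_ratio=0.8, min_per_group=2)
for group, selected in [("A", True), ("A", True), ("B", True), ("B", False)]:
    alerts = monitor.record(group, selected)
print("Groups needing review:", alerts)
```

The flagged groups are a prompt for the human feedback loop described above, not a verdict: small windows produce noisy rates, so reviewers should confirm any alert before changing the model.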

Build a Long-Term Governance Framework

Fixing immediate issues is crucial, but a long-term strategy prevents them from happening again. This is where a strong AI governance framework comes in. It’s about creating clear policies, roles, and responsibilities for how AI is developed, deployed, and maintained in your organization. Your framework should define your company’s standards for fairness and transparency and outline the steps for ensuring compliance.

Maintain Transparency with Stakeholders

Building trust in your AI systems requires transparency. Your stakeholders, including customers, employees, and regulators, need to know that you are taking fairness seriously. After an audit, communicate the results and the steps you’re taking to address them. You don’t have to share every technical detail, but a clear summary of your commitment and actions goes a long way. Earning a certification or being listed in a public directory of compliant vendors is an excellent way to signal your dedication. The Warden Assured Directory, for example, allows companies to publicly showcase their certified, fair, and compliant AI systems.

Algorithmic Bias Audit FAQs

Is a bias audit just a one-time check?

Not at all. Think of fairness as an ongoing practice, not a one-time event. An initial audit gives you a crucial baseline, but AI models can change over time as they encounter new data. The best approach is to establish a system for continuous monitoring. This allows you to keep a constant pulse on your AI’s performance and catch any fairness issues that might pop up later, ensuring your system remains compliant and equitable long after the initial audit is complete.

My company isn't in a location with specific AI laws like NYC. Why should we conduct an audit?

While laws like NYC’s Local Law 144 are major drivers, they aren't the only reason to conduct an audit. Proactively auditing your AI is a smart business decision, regardless of your location. It helps you manage risk by identifying potential legal issues before they become problems. More importantly, it’s about building trust. Demonstrating that your technology is fair and has been independently verified helps you earn the confidence of your customers, candidates, and employees.

What happens if an audit finds bias in our AI? Are we in trouble?

Finding bias doesn't mean your product is a failure; it means the audit is working. The purpose of an audit is to give you a roadmap for improvement. The findings will show you exactly where the problems are, whether in your data or your model, so you can take targeted steps to fix them. It’s a constructive process that helps you build a stronger, fairer, and more defensible product.

Can we just do this ourselves, or do we need a third party?

While internal reviews are a good start, bringing in an independent third party adds a vital layer of objectivity and credibility. An external expert can spot blind spots your internal team might miss and provides an unbiased assessment that holds more weight with regulators and customers. This independent validation is what builds true market trust and shows you are genuinely committed to fairness and transparency.

How do we even begin if we don't have a dedicated AI ethics team?

You don't need a large, dedicated team to get started. The key is to have a structured process and the right tools. This is where an AI assurance platform can be incredibly helpful. It provides a guided framework for the entire audit process, from data analysis and model testing to reporting and continuous monitoring. It gives you the technical infrastructure and expert guidance needed to conduct a thorough audit, even without in-house specialists.